Incrementality evidence from controlled experiments calibrates MMM coefficients — replacing uncertain statistical estimates with observed causal truth. Three approaches, and what changes in the model when they work.

Thought Leadership - 8 min read - MASS Analytics

Calibrating MMM with incrementality evidence is about getting closer to the truth instead of trusting what the model produces alone. MMM infers causal effects from patterns in data. Incrementality experiments anchor those inferences to something firmer: directly observed evidence of what advertising caused.

When you combine both properly, you get a measurement system that’s substantially more reliable than either approach alone, and one where each method makes the other more useful over time.

The Two-way Relationship

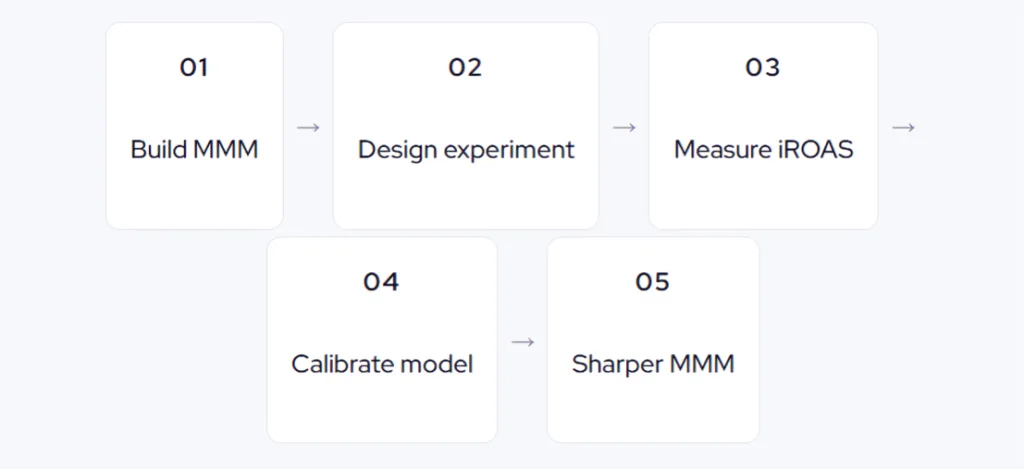

It’s worth being explicit about something that often goes underappreciated. Incrementality experiments calibrate MMM, but MMM also guides better experiments. The model can tell you how many weeks a test needs to run to detect a meaningful signal, which regions provide the best test-control match, and what level of spend variation gives the experiment its best chance of yielding a usable incrementality estimate. Neither is simply a consumer of the other’s outputs; each improves the other.
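As a rough illustration of the test-duration question, a textbook power calculation gives a first estimate. The sketch below assumes a two-sided z-test on the mean weekly test-minus-control difference, with independent weeks and a known noise level. The function name `weeks_needed` and its inputs (an expected weekly lift read off the MMM, and the weekly standard deviation of the test-minus-control difference) are hypothetical and not taken from any specific tool.

```python
import math
from statistics import NormalDist


def weeks_needed(expected_weekly_lift, weekly_noise_sd, alpha=0.05, power=0.8):
    """Weeks required to detect the expected lift with the given power.

    Assumes independent weekly differences, so the standard error of the
    mean difference after n weeks is weekly_noise_sd / sqrt(n).
    """
    if expected_weekly_lift <= 0 or weekly_noise_sd <= 0:
        raise ValueError("lift and noise must both be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_power = NormalDist().inv_cdf(power)
    n = ((z_alpha + z_power) * weekly_noise_sd / expected_weekly_lift) ** 2
    return math.ceil(n)


# Hypothetical inputs: the model expects 5,000 in weekly lift,
# and the matched regions differ by about 8,000 (sd) week to week.
print(weeks_needed(5_000, 8_000))  # -> 21
```

Real weekly data is usually autocorrelated, so a figure like this is better read as a floor than as a final design.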

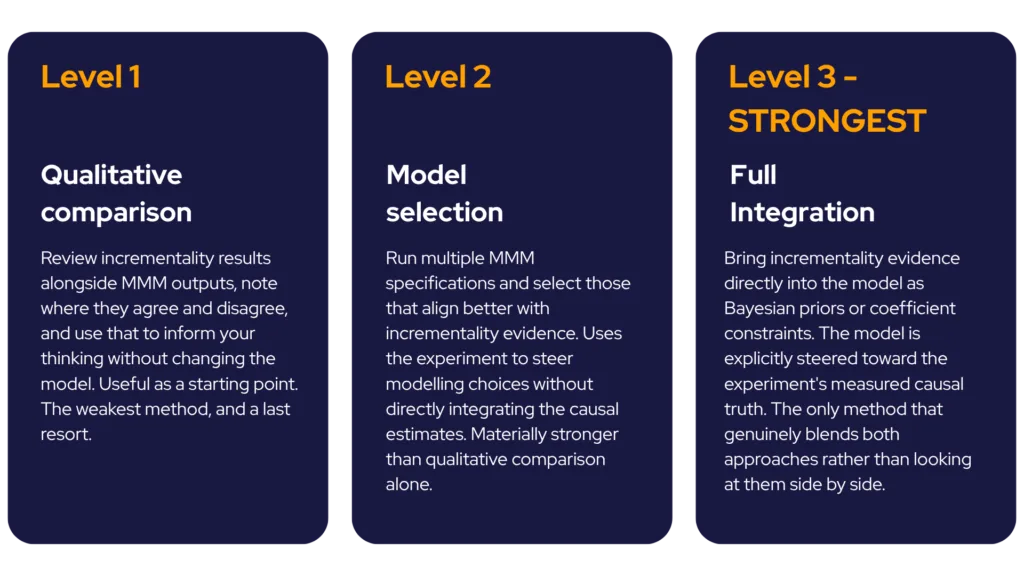

Three Levels of Calibration

Not all calibration is equal. There are three approaches, each with a different level of rigour:

Getting the Comparison Right: KPIs and Carryover

Before you can calibrate, the experiment data and the MMM data must speak the same language. This is where calibration efforts most often break down. If the experiment measures online conversions but your MMM targets total revenue, you need to transform one into the other. If the experiment is daily and the MMM is weekly, you need to aggregate. Mismatched units produce calibration that’s worse than no calibration.
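A minimal sketch of that alignment step, assuming the experiment reports daily incremental online conversions and the MMM works on weekly total revenue. The names `revenue_per_conversion` and `total_to_online_ratio` are hypothetical conversion factors that would have to come from your own data; scaling online revenue up to total revenue with a fixed ratio is itself a strong assumption.

```python
from collections import defaultdict
from datetime import date, timedelta


def week_start(day):
    """Return the Monday of the week containing `day`."""
    return day - timedelta(days=day.weekday())


def to_weekly_revenue(daily_conversions, revenue_per_conversion,
                      total_to_online_ratio=1.0):
    """Convert {date: incremental online conversions} into
    {week_start: incremental total revenue}.

    Both conversion factors are assumptions supplied by the analyst.
    """
    weekly = defaultdict(float)
    for day, conversions in daily_conversions.items():
        online_revenue = conversions * revenue_per_conversion
        weekly[week_start(day)] += online_revenue * total_to_online_ratio
    return dict(sorted(weekly.items()))


# Hypothetical two-week test: 10 incremental conversions a day, 50 each.
daily = {date(2024, 1, 1) + timedelta(days=i): 10 for i in range(14)}
weekly = to_weekly_revenue(daily, revenue_per_conversion=50.0)
for start, revenue in weekly.items():
    print(start, revenue)
```

The week boundary (Monday here) should match whatever convention the MMM dataset uses; an off-by-one-day shift is an easy way to reintroduce the mismatch.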

Carryover effects require particular care. Experiments typically measure short-term impact within the test window. MMM accounts for the full effect including carryover: the weeks or months of continued influence after the campaign ends. As a result, the experiment’s incremental ROAS (iROAS) will often look like a lower bound on the model’s estimate. The right approach is to compare short-term effects like-for-like, restricting the model’s estimate to the same window the experiment observed, rather than treating the experiment’s number as the complete picture.
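To make the like-for-like comparison concrete, here is a sketch under two simplifying assumptions: carryover follows geometric adstock with a fixed weekly retention rate, and the response is linear (no saturation). The function names and the example figures are hypothetical. Each unit of spend in week t delivers a share 1 - retention^(window - t) of its long-run effect before the window closes.

```python
def in_window_share(weekly_spend, retention, window_weeks=None):
    """Share of the long-run effect that lands inside the test window.

    weekly_spend: spend per week, starting at the first test week.
    retention:    geometric adstock retention rate, 0 <= retention < 1.
    window_weeks: measurement window; defaults to the length of the spend.
    """
    if not 0 <= retention < 1:
        raise ValueError("retention must be in [0, 1)")
    window = len(weekly_spend) if window_weeks is None else window_weeks
    total = sum(weekly_spend)
    if total <= 0:
        raise ValueError("total spend must be positive")
    captured = sum(
        spend * (1 - retention ** (window - t))
        for t, spend in enumerate(weekly_spend)
        if t < window  # spend after the window contributes nothing to it
    )
    return captured / total


def short_term_roas(full_roas, share):
    """Model ROAS restricted to the experiment's measurement window."""
    return full_roas * share


share = in_window_share([100, 100, 100, 100], retention=0.5)
print(share)                        # -> 0.765625
print(short_term_roas(4.0, share))  # -> 3.0625
```

In this made-up case, a model ROAS of 4.0 should be compared with the experiment at roughly 3.06, not at 4.0.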

What Changes in the Model When Calibration Works

What changes after incrementality calibration:

- Channel contribution corrected to reflect the measured causal incrementality

- ROAS rising to align with the experimentally validated lift

- Optimisation recommendations shifting to account for the calibrated channel

- Model fit improving: better statistical performance, more stable coefficients

- Budget recommendations becoming more defensible to finance and boards
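The mechanics behind the first and fourth points can be illustrated with a precision-weighted (normal-normal) update. This is a generic textbook sketch, not necessarily how any of the three calibration approaches is implemented; the function name and the numbers are hypothetical. It assumes both estimates are on the same scale, roughly normal, and independent.

```python
def combine_estimates(model_mean, model_sd, experiment_mean, experiment_sd):
    """Precision-weighted combination of a model estimate and an
    experiment estimate of the same quantity (for example short-term ROAS).

    Returns the combined mean and standard deviation.
    """
    if model_sd <= 0 or experiment_sd <= 0:
        raise ValueError("standard deviations must be positive")
    model_precision = 1 / model_sd ** 2
    experiment_precision = 1 / experiment_sd ** 2
    combined_var = 1 / (model_precision + experiment_precision)
    combined_mean = combined_var * (
        model_mean * model_precision + experiment_mean * experiment_precision
    )
    return combined_mean, combined_var ** 0.5


# Hypothetical: the model says 2.0 (sd 1.0), the experiment says 3.0 (sd 0.5).
mean, sd = combine_estimates(2.0, 1.0, 3.0, 0.5)
print(round(mean, 3), round(sd, 3))  # -> 2.8 0.447
```

The combined estimate moves towards the more precise source, and its uncertainty is smaller than either input, which is one way to read "more stable coefficients".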

In one D2C client engagement, properly calibrated MMM produced a 26% increase in measured incremental sales contribution, a 24% improvement in measured ROI, and a 33% increase in budget allocation to the tested channel, all from a single well-designed incrementality experiment feeding into a properly integrated model.

When your MMM stops guessing and starts learning from observed incrementality evidence, the whole organisation’s relationship with measurement changes.
